Ethical AI by design embeds governance, compliance, and responsibility into AI development workflows.

Ethical AI by design is the practice of embedding AI ethics, accountability, and governance principles directly into the architecture, development, and deployment of AI systems. It goes beyond post-hoc audits by making trustworthy AI, explainable AI, and AI transparency foundational, not optional. This proactive approach helps ensure that AI systems, especially agentic and autonomous ones, align with enterprise values, meet regulatory requirements, and deliver socially responsible outcomes across real-world applications.

Detailed Definition & Explanation

Ethical AI by design is a development paradigm that integrates ethical principles, such as fairness, transparency, safety, and accountability, directly into the technical and operational lifecycle of AI systems. Unlike reactive approaches that attempt to correct bias or non-compliance after deployment, ethical AI by design ensures that responsible AI implementation is embedded at every stage, from data selection to model training, deployment, and post-decision auditing. It aligns with enterprise goals for AI governance, AI regulatory compliance, and long-term trust, especially in high-impact domains like finance, education, and healthcare.

This approach enables organizations to reduce reputational risk, improve AI safety and reliability, and comply with a growing body of regulations and standards, such as the EU AI Act, the OECD AI Principles, and the NIST AI Risk Management Framework. Agentic AI systems, which can make decisions autonomously, require even deeper integration of AI ethics and compliance mechanisms, making the “by design” approach critical for enterprise scalability.

Here’s how it works

- Ethical Goal Setting

Organizations define what “ethical” means in their context, mapping it to use cases, regulations, and societal expectations.

- Risk and Bias Assessment

AI development teams evaluate datasets and model architecture for embedded bias or safety risks before deployment.

- Embedding Governance Mechanisms

AI transparency, auditability, and human-in-the-loop design are included in the architecture to allow oversight and control.

- Continuous Monitoring and Validation

Post-deployment, the system is monitored for behavior drift, fairness breakdowns, or non-compliance, enabling retraining and course correction.

- Cross-Functional Alignment

Ethics, legal, technical, and business teams collaborate to ensure the AI aligns with corporate policy, public expectation, and legal mandates.
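
As a rough illustration of the risk assessment and monitoring steps above, the sketch below computes per-group approval rates and flags a batch of decisions when the gap between groups exceeds a threshold. It is a minimal example under stated assumptions, not a complete fairness audit: the field names (`group`, `approved`), the 0.1 threshold, and the sample records are all hypothetical, and demographic parity is only one of several fairness measures a team might choose.

```python
from collections import defaultdict

def selection_rates(records, group_key="group", outcome_key="approved"):
    """Return the share of positive outcomes for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for record in records:
        totals[record[group_key]] += 1
        if record[outcome_key]:
            positives[record[group_key]] += 1
    return {group: positives[group] / totals[group] for group in totals}

def demographic_parity_gap(rates):
    """Largest difference in selection rate between any two groups."""
    return max(rates.values()) - min(rates.values())

def fairness_check(records, max_gap=0.1):
    """Flag a batch for review when the gap exceeds max_gap.

    max_gap is an assumed policy threshold, not a regulatory value.
    """
    rates = selection_rates(records)
    gap = demographic_parity_gap(rates)
    return {"rates": rates, "gap": gap, "needs_review": gap > max_gap}

# Hypothetical decisions, e.g. one batch of loan outcomes.
sample = [
    {"group": "A", "approved": True},
    {"group": "A", "approved": True},
    {"group": "A", "approved": False},
    {"group": "A", "approved": True},
    {"group": "B", "approved": True},
    {"group": "B", "approved": False},
    {"group": "B", "approved": False},
    {"group": "B", "approved": False},
]

report = fairness_check(sample)
print(report)
```

The same check can run before deployment on validation data and again on live decisions at a regular interval, so that a widening gap triggers review or retraining rather than going unnoticed.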

Ethical AI by design can take several forms:

• Rule-Based Compliance Frameworks: Systems hardcoded with guardrails based on industry-specific ethical and legal regulations.

• Explainable AI (XAI) Models: Architectures that prioritize interpretability, allowing users and regulators to understand how decisions were made.

• Human-in-the-Loop Design: Ensures critical decisions, such as those in insurance or education, require human validation before execution.

• Value Alignment Algorithms: Models trained using reinforcement learning or constitutional AI to align with societal or organizational values.

• Pre-Deployment Certification Models: Frameworks that require ethical risk scoring, documentation, and third-party review before release.
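
To make two of these approaches concrete, the sketch below combines a rule-based guardrail with human-in-the-loop escalation. It is illustrative only: the `Decision` fields, the blocked action name, and the thresholds are hypothetical, and a real system would load its rules from reviewed policy rather than hardcode them.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Hypothetical policy values chosen for illustration.
BLOCKED_ACTIONS = {"cancel_policy"}
REVIEW_AMOUNT = 10_000.0
MIN_CONFIDENCE = 0.80

@dataclass
class Decision:
    action: str
    amount: float
    confidence: float
    reasons: List[str] = field(default_factory=list)

def route(decision: Decision) -> Tuple[str, List[str]]:
    """Return a route ('block', 'human_review', or 'auto_approve')
    together with an audit trail explaining why it was chosen."""
    audit: List[str] = []

    # Hard guardrail: some actions are never left to an agent.
    if decision.action in BLOCKED_ACTIONS:
        audit.append(f"action '{decision.action}' is not permitted for agents")
        return "block", audit

    # Escalation rules: any one of these sends the case to a person.
    if not decision.reasons:
        audit.append("no explanation attached to the decision")
    if decision.amount >= REVIEW_AMOUNT:
        audit.append(f"amount {decision.amount:.2f} meets the review threshold")
    if decision.confidence < MIN_CONFIDENCE:
        audit.append(f"confidence {decision.confidence:.2f} is below the minimum")

    if audit:
        return "human_review", audit

    audit.append("all checks passed")
    return "auto_approve", audit

# A small, well-explained claim passes; a large one is escalated.
print(route(Decision("approve_claim", 500.0, 0.95, ["policy active"])))
print(route(Decision("approve_claim", 25_000.0, 0.95, ["policy active"])))
```

Returning the audit trail alongside the route keeps each outcome explainable, which is the property the XAI and certification approaches above depend on.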

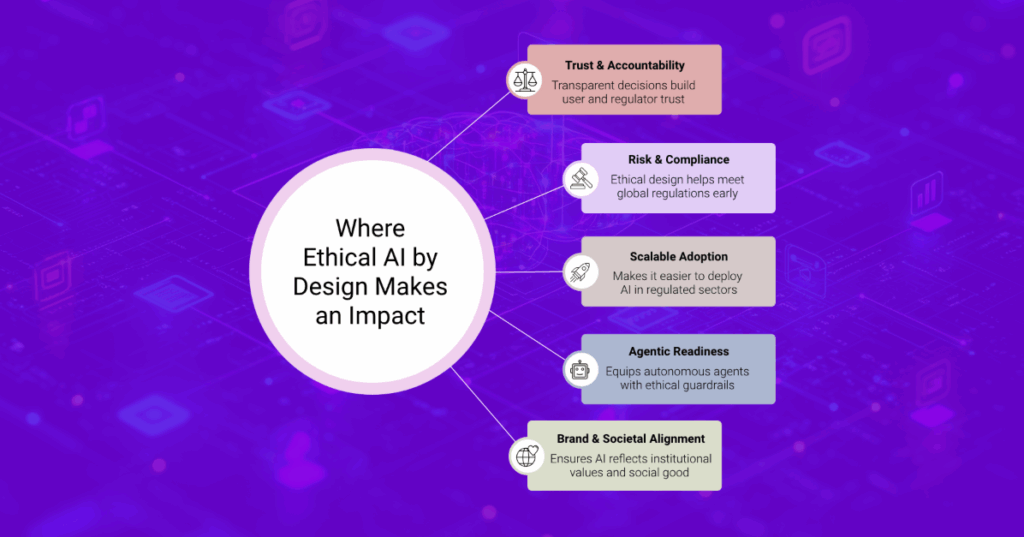

Why It Matters

1. Builds Trust and Accountability at Scale

Ethical AI by design ensures AI systems make decisions that are fair, transparent, and aligned with human values, which is crucial for enterprise-wide adoption. In financial services and insurance, where algorithmic decisions impact loans, credit, and claims, embedding AI accountability and explainable AI helps build user and regulator trust while reducing litigation risks.

2. Minimizes Legal and Regulatory Risk

By embedding AI ethics and compliance from the outset, enterprises can preempt violations of emerging regulations like the EU AI Act or industry-specific frameworks. For eCommerce and consumer products, this means their personalization or recommendation engines can remain innovative without triggering scrutiny for discrimination or unfair targeting.

3. Enhances AI Adoption in Regulated Industries

Many sectors struggle to scale AI due to concerns over bias and opacity. By proactively integrating trustworthy AI systems and ethical AI frameworks, higher education institutions and financial firms can confidently roll out AI tutors, admissions tools, or fraud detection systems while meeting internal and external ethical standards.

4. Future-Proofs Agentic AI Workflows

Autonomous agents often make decisions without direct human oversight, making design-stage ethics essential. In industries like insurance and consumer products, where agent-led automation is growing, responsible AI implementation helps ensure that agents act within transparent, legally defensible boundaries, thereby safeguarding brand equity and customer loyalty.

5. Aligns AI with Institutional Values and Social Good

Ethical AI by design helps organizations match technology deployment with brand principles and societal expectations. In higher education, this may mean ensuring AI tutors adapt to student diversity without bias; in consumer products, it may mean marketing agents operate with fairness, inclusion, and accessibility by default.

Adoption Trends and Real-World Examples

As AI systems become more deeply integrated into enterprise workflows, the need for ethical oversight is no longer theoretical. According to PwC’s 2023 Emerging Technology Survey, 73% of U.S. companies have already adopted AI in some form, underscoring the urgency of embedding ethical safeguards early in the development cycle. Meanwhile, the Capgemini Research Institute reports an 800% increase in the number of organizations that have formalized AI ethics charters, from just 5% in 2019 to 45% by 2020, signaling growing institutional awareness. However, public expectations are outpacing enterprise maturity: a study by the IBM Institute for Business Value and Oxford Economics found that 85% of consumers want ethics factored into AI use, while only 75% of executives consider it a priority.

This growing emphasis on responsible AI development is already shaping enterprise strategy, as shown in the following four real-world applications:

• IBM Watson OpenScale

IBM’s enterprise-grade AI management platform offers tools for bias detection, explainability, and ongoing monitoring, helping financial and healthcare organizations meet governance requirements and improve transparency in algorithmic decisions.

• Microsoft Responsible AI Standard

Microsoft’s internal development framework ensures that AI products across Office, Azure, and GitHub follow clear ethical design principles. This includes fairness reviews, harm mitigation steps, and transparency checkpoints during model development and deployment.

• Unilever’s Responsible AI Principles

In the consumer products sector, Unilever has formalized guidelines to govern AI used in marketing, consumer insights, and supply chain analytics. Its internal board ensures that AI solutions align with brand values, are explainable, and respect data privacy by design.

• FD Ryze

FD Ryze embeds ethical AI by design across its agentic ecosystem, enabling enterprises in insurance, eCommerce, higher education, and more to deploy AI agents with explainability, consent-aware processing, and embedded compliance checks. FD Ryze helps clients operationalize AI ethics without slowing down innovation.

What Lies Ahead

1. AI Ethics Will Be Codified into Industry Standards

As regulations like the EU AI Act and proposals like India’s Digital India Act mature, ethical design will become a baseline requirement rather than a competitive differentiator. Financial services and insurance firms will need to integrate AI ethics and compliance into internal audit and product design cycles to avoid legal exposure and reputational damage.

2. Agentic AI Will Demand Embedded Ethical Logic

With the rise of autonomous, self-directed agents, enterprises can no longer rely on surface-level fairness checks. In eCommerce and consumer products, agentic AI must be pre-programmed with policy awareness, explainability, and escalation pathways to ensure it behaves responsibly in real time, even without human oversight.

3. AI Ethics Teams Will Shift from Advisory to Operational

Ethics will move out of standalone committees and into integrated product teams, influencing technical design, data workflows, and ML operations. In higher education, this means academic AI tools will be evaluated for fairness and accessibility as part of the development lifecycle; in insurance, product design teams will embed risk-mitigation logic directly into quote engines and claims tools.

4. Ethical AI Will Influence Customer Loyalty and Brand Equity

Consumers are growing more aware of algorithmic harm and misuse. In sectors like consumer products and financial services, transparent and fair AI behavior will directly affect customer trust, user retention, and long-term brand loyalty, particularly among Gen Z and regulation-conscious buyers.

5. Third-Party AI Certification Will Become a Market Expectation

External validation for trustworthy AI systems via independent audits or public benchmarks will emerge as a de facto requirement. Enterprises in insurance, education, and eCommerce will be expected to show that their AI systems are not only technically sound, but ethically certifiable, especially when deployed at scale.

Related Terms

- Responsible AI

- Explainable AI (XAI)

- AI Regulatory Compliance

- Model Auditing

- AI Transparency

- Ethical AI Frameworks