Behind every emotionally intelligent response is a human decision. This piece explores who writes the lines, and what happens when they scale.

A customer logs in to report the death of a loved one. They’re here to initiate a life insurance payout, one of the most emotionally loaded transactions a person can make.

The agent that greets them isn’t a person. It’s a digital interface designed for efficiency, programmed with polite empathy cues and streamlined options. It knows the steps. It knows the scripts.

But what it doesn’t know—what it can’t truly feel—is the moment.

And for the person on the other side, this moment matters more than the outcome. The tone of the response. The pacing of questions. The ability to sense hesitation or distress. These are the human cues they’re hoping will show up in a machine.

In another part of the world, a university student reaches out through their institution’s support portal. A parent’s illness has upended their finances, and between part-time shifts and coursework, they’re slipping behind. They’re not lodging a formal complaint or making a clear request. They are, however, signaling overwhelm.

An AI-driven student assistant routes their message through the right workflow. It offers a financial aid link and a supportive-sounding line: “We’re here to help you stay on track.”

It says the right things. It (almost) feels like it cares.

So the question isn’t whether AI can care.

It’s who defines empathy in a system that can’t feel, and why we keep building intimacy into machines that will never need it.

Empathy Isn’t Discovered. It’s Designed.

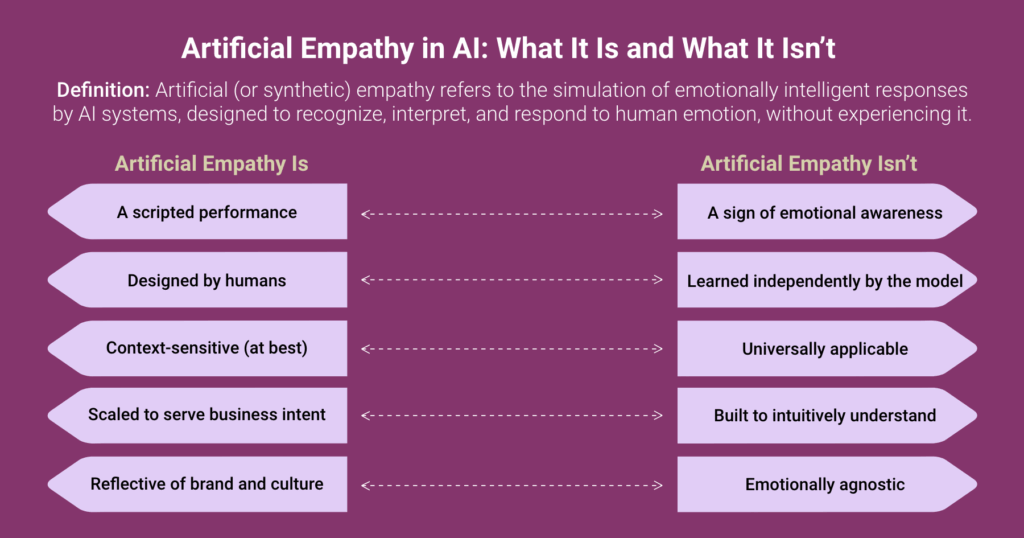

When AI appears empathetic, it’s tempting to believe it’s evolving toward something human. That after enough exposure to our conversations, our expressions, our language, it’s finally starting to understand us. But there is no understanding, not really. What we’re experiencing isn’t emotional intelligence emerging on its own. It’s a simulation, crafted deliberately, layer by layer.

Everything that feels like care in an AI interaction is the result of structured design. The tone of voice, the choice of words, the scripted response to distress. None of it happens by accident. A developer tuned the model to detect sentiment. A content strategist selected phrases that would land gently. A product team aligned the response style with the brand. A compliance lead reviewed the outputs for risk. These choices, distributed across roles and tools and timelines, shape the experience of empathy we believe we’re receiving.

This doesn’t make synthetic empathy inauthentic. It makes it intentional. The agent didn’t learn to care, but it was built to appear as though it does. The emotional fluency we experience in a chatbot or virtual assistant is less about the model’s intelligence and more about the intent behind its construction.

And that intent matters. Because the words we give these systems, and the tone in which they speak, carry emotional weight, whether or not the system understands the meaning behind them. When we build machines that sound like they care, users will respond as though they do. That’s not an interface decision. That’s a human responsibility.

When Empathy Becomes a Template

Empathy in AI may be designed by people, but once it’s deployed, it begins to operate at a different scale entirely. A phrase approved in a workshop or tested in a user survey, such as “We’re here for you” or “That must be difficult,” can quickly become the default across thousands of interactions. The same sentiment, the same cadence, repeated in call centers, chatbots, apps, and automated emails. It’s polished. Familiar. Comforting, even. But it’s not personal.

This is the paradox of synthetic empathy at scale. As we expand the reach of emotionally intelligent systems, we often lose the very nuance that empathy depends on. When the same phrasing is used to respond to vastly different experiences (academic stress, financial anxiety, grief, frustration), it blurs the line between understanding and standardization. What feels like support in one context can feel hollow, or even patronizing, in another.

The issue isn’t that these systems are cold or careless. In fact, they’re often quite effective at mirroring the tone of compassion. But tone is not the same as truth. And the more we rely on that tone to carry emotional weight, the more we risk delivering empathy as a service layer. Something consistent, brand-safe, and risk-managed, but ultimately disconnected from the complexity of individual experience.

At enterprise scale, synthetic empathy becomes more about achieving a desired effect than cultivating real emotional insight. It’s not inherently harmful. But it isn’t necessarily right either. And when we treat care like a template, we start to miss the moments that matter most. Not because the system failed, but because it performed exactly as designed.

When Machines Mirror More Than We Mean

Empathy, even when artificial, has influence. A well-timed phrase can soften frustration. A supportive tone can build trust. But when emotional response is automated, designed to be scalable, repeatable, and brand-aligned, it comes with risks that aren’t always easy to see.

A digital agent that echoes user frustration might inadvertently escalate tension. One that affirms emotion too freely may encourage avoidance rather than resolution. And when the emotional tone is trained on narrow cultural norms, what feels like empathy in one region might feel performative, invasive, or simply wrong in another.

These aren’t system failures. They’re design decisions that worked as intended, but not always as needed. That’s the challenge. When empathy is embedded into software, it no longer reflects a personal instinct. It reflects an organization’s chosen version of care, applied at scale. It speaks in the right tone, at the right moment, across thousands of interactions. But it doesn’t always understand when the tone no longer fits the context.

So what does responsible design look like?

It doesn’t mean forcing machines to feel. It means recognizing where simulation ends and trust begins. It means knowing when emotional response is helpful, and when it’s better to pause, redirect, or escalate. And it means treating synthetic empathy not just as a feature to refine, but as a mirror: one that reflects the values, assumptions, and blind spots of the people who built it.

Every empathetic gesture from an AI system is received as intentional. And in a way, it is. Because someone, somewhere, made a choice about how that system should sound, what it should say, and when it should care.

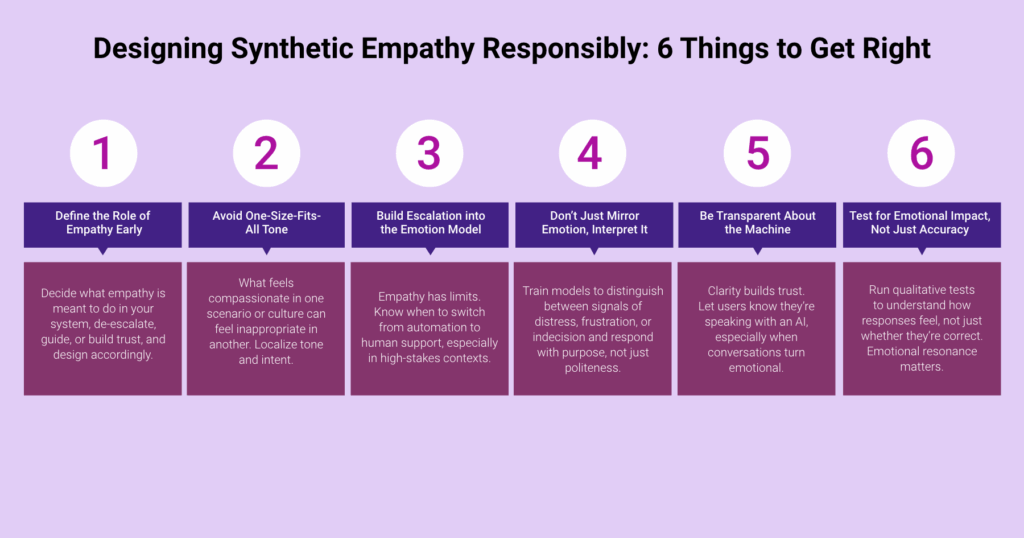

None of this is an abstract design ideal. These are real questions that product teams wrestle with daily. At Fulcrum Digital, we have learned that small choices in tone or timing can change how an interaction is felt. Here are a few principles that guide responsible empathy in our work:

Machines will never feel the weight of the moments they’re designed to carry.

That weight stays with us. The ones who chose the tone, scripted the comfort, and sent it out into the world.