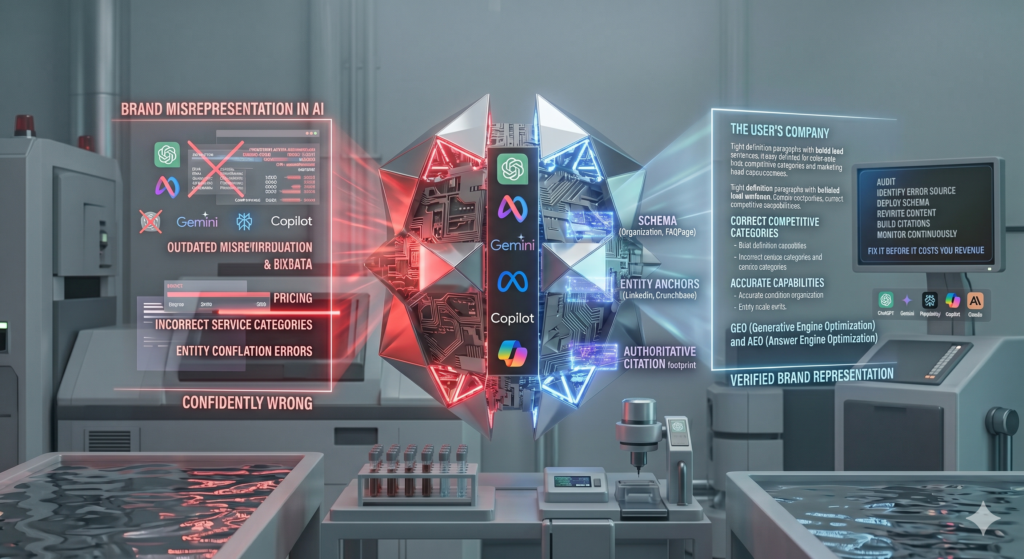

Multi-LLM citation tracking is the practice of measuring when, where, and how often AI-powered answer engines like ChatGPT, Gemini, Perplexity, and Claude reference your brand, content, or domain in their generated responses. As of 2025, it is no longer a niche analytics experiment. It is a core competency for any enterprise that treats search as a revenue channel.

According to Gartner (2024), traditional search engine query volume is projected to decline 25% by 2026 as AI answer interfaces absorb informational search. The forecast reflects a structural market shift that is already visible in user behavior data. Enterprises that cannot measure their presence in AI-generated answers are operating blind in the fastest-growing discovery channel in their category.

Fulcrum Digital, an enterprise digital engineering and AI transformation firm, works with organizations navigating this transition every day. This post lays out why multi-LLM citation tracking deserves a permanent seat in the enterprise search stack, what it measures that traditional tools cannot, and how to build a citation monitoring discipline that compounds over time. The six-step implementation framework below is designed to be actioned immediately, not archived.

What Multi-LLM Citation Tracking Actually Measures

Multi-LLM citation tracking tells you whether the AI systems your buyers consult are recommending your brand, and under which query conditions they do or do not.

That is a fundamentally different measurement question from traditional SEO rank tracking, which records a URL’s position in a link list. Citation tracking records whether an AI system trusts your content enough to surface it as the answer to a specific query. The distinction matters because AI-generated answers do not always include links, and a brand can have strong AI citation presence with mediocre traditional rankings, or vice versa. Conflating the two produces an incomplete strategy.

At the platform level, citation tracking monitors AI responses across a defined set of queries in your category, typically 20 to 50 representative queries spanning informational, comparative, and transactional intent. For each query, the tracking system records: which platforms surfaced your brand, what context the citation appeared in (recommendation, contrast, or reference), and whether the citation included a link, a paraphrase, or a verbatim quote from your content.
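For teams logging this by hand or with a script, each observation can be stored as one small record per query, per platform, per run. A minimal Python sketch, assuming that granularity; the class and field names are hypothetical rather than a schema from any tracking product:

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Intent(Enum):
    INFORMATIONAL = "informational"
    COMPARATIVE = "comparative"
    TRANSACTIONAL = "transactional"


class CitationContext(Enum):
    # The three citation contexts named in the text.
    RECOMMENDATION = "recommendation"
    CONTRAST = "contrast"
    REFERENCE = "reference"


class CitationForm(Enum):
    # How the brand's content surfaced in the answer.
    LINK = "link"
    PARAPHRASE = "paraphrase"
    VERBATIM_QUOTE = "verbatim_quote"


@dataclass(frozen=True)
class CitationRecord:
    """One query, run once, on one platform (hypothetical schema)."""
    query: str
    intent: Intent
    platform: str                              # e.g. "ChatGPT", "Perplexity"
    brand_cited: bool
    context: Optional[CitationContext] = None  # only set when cited
    form: Optional[CitationForm] = None        # only set when cited

    def __post_init__(self) -> None:
        # Reject contradictory rows: citation details without a citation.
        if not self.brand_cited and (self.context is not None or self.form is not None):
            raise ValueError("context and form apply only when brand_cited is True")


record = CitationRecord(
    query="what is enterprise AI transformation",
    intent=Intent.INFORMATIONAL,
    platform="Perplexity",
    brand_cited=True,
    context=CitationContext.REFERENCE,
    form=CitationForm.LINK,
)
```

Keeping "not cited" rows in the log, rather than dropping them, is what later makes per-platform citation share computable.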

GEO (Generative Engine Optimization) differs from traditional SEO in that GEO targets AI retrieval systems rather than link-list algorithms. AEO (Answer Engine Optimization) differs from GEO in that AEO focuses specifically on the question-and-answer format used by voice assistants and AI chat interfaces, with shorter, more structurally rigid answer requirements. Multi-LLM citation tracking is the measurement layer that validates whether GEO and AEO efforts are producing real citation outcomes. Without it, optimization is hypothesis. With it, optimization is accountable.

For a deeper look at how Fulcrum Digital frames the GEO discipline, see the GEO services overview.

Why the Enterprise Search Stack Cannot Afford a Citation Blind Spot

Enterprises that rely solely on traditional rank tracking are measuring the wrong channel. AI answer engines now intercept a meaningful and growing share of queries that never produce a click on a ranked link.

The Reuters Institute Digital News Report 2024 found that 32% of online news consumers globally now use AI-generated summaries as their primary news discovery channel, up from 7% in 2022. That is not a niche audience. That is mainstream user behavior shifting at a pace that most enterprise search stacks were not designed to track. The same pattern is observable in B2B buying behavior: buyers who use ChatGPT or Perplexity to research enterprise software vendors receive AI-curated shortlists. Whether your brand appears on those shortlists is not captured in Google Search Console.

The structural problem is a measurement gap, not a content gap. Most enterprises have invested years in content marketing, technical SEO, and authority building. The content often exists. What is absent is the diagnostic infrastructure to determine whether that content is being retrieved by AI systems and cited in AI-generated answers. Without multi-LLM citation tracking, there is no way to know.

The absence of that data produces compounding blind spots. Budgets get allocated to traditional SEO based on rank improvements that no longer correlate with AI-driven discovery. Content refresh decisions are made without understanding which pages AI systems currently cite and which they ignore. Competitive intelligence is drawn from keyword gap analysis that does not account for which competitors AI systems recommend by name. Each of these gaps compounds. See Fulcrum Digital’s analysis of enterprise AI search transformation for context on how organizations are restructuring search programs around these realities.

The Multi-LLM Citation Tracking Framework: Six Steps to Implementation

A citation tracking program does not require a new analytics platform to start. It requires a structured query protocol, a consistent logging methodology, and the discipline to run it on a defined cadence.

The six steps below are sequenced to build on each other. Steps 1 through 3 establish the baseline infrastructure. Steps 4 through 6 establish the optimization and monitoring loop. Most organizations can complete the baseline in under a week with existing tools. The optimization loop is ongoing.

Step 1: Audit AI Crawler Access in robots.txt

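Before measuring citations, confirm that AI platforms can crawl your content. Verify that your robots.txt does not disallow any of the following crawler agents. Explicit Allow rules are optional: under the robots exclusion protocol, an agent with no matching Disallow rule may crawl by default.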

- GPTBot (OpenAI / ChatGPT)

- Anthropic-AI (Claude)

- Amazon-Bedrock (AWS AI services)

- Google-Extended (Gemini and Google AI training)

- PerplexityBot (Perplexity AI)

This is a non-negotiable AEO prerequisite per the Klizos AEO Playbook 2025. Content that AI crawler agents cannot access cannot be cited, regardless of how well-structured it is. A Disallow rule for GPTBot, for example, stops OpenAI's crawler from fetching that page at all. Check robots.txt before any other optimization work.
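The robots.txt check can be scripted with Python's standard-library `urllib.robotparser`. This sketch parses robots.txt text you supply (no network call) and reports which of the agent tokens listed above would be refused a given URL. The function name and sample file are illustrative, and the token list is copied from this step rather than verified against each vendor's documentation:

```python
from urllib.robotparser import RobotFileParser

# Agent tokens as listed in Step 1. Confirm each token against the vendor's
# current crawler documentation; tokens change over time.
AI_AGENTS = ["GPTBot", "Anthropic-AI", "Amazon-Bedrock", "Google-Extended", "PerplexityBot"]


def blocked_ai_agents(robots_txt: str, url: str) -> list[str]:
    """Return the AI agents that the given robots.txt text would stop from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse text directly; no network call
    return [agent for agent in AI_AGENTS if not parser.can_fetch(agent, url)]


sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

print(blocked_ai_agents(sample_robots, "https://www.example.com/insights/geo-guide"))
# ['GPTBot']
```

One caveat on the design: `urllib.robotparser` applies the first matching rule in a group, while RFC 9309 specifies the longest match, so results can differ for files that mix `Allow` and `Disallow` lines on overlapping paths. Treat the output as a quick screen.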

Step 2: Establish a Citation Baseline Across Target Platforms

Select 20 to 30 representative queries in your category spanning three intent types: informational (“what is enterprise AI transformation”), comparative (“best enterprise AI partners compared”), and transactional (“enterprise AI consulting firm”). Query each target AI platform manually or via API: ChatGPT (GPT-4o), Gemini Advanced, Perplexity Pro, Claude 3 Opus, and Microsoft Copilot. Log which platforms cite your domain, which cite named competitors, and which cite no specific source.

This baseline serves two purposes. First, it reveals your current citation share by platform, the starting benchmark for all future measurement. Second, it identifies which AI platforms are most active in your category, which should inform where optimization effort concentrates first.
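Citation share can be computed directly from the baseline log. A minimal sketch, assuming citation share means the fraction of logged queries on a platform whose answer cited the domain (one reasonable definition among several); the row layout and domains are hypothetical:

```python
from collections import defaultdict

# Hypothetical baseline row: (platform, query, domains cited in the answer).
BaselineRow = tuple[str, str, frozenset[str]]


def citation_share_by_platform(log: list[BaselineRow], domain: str) -> dict[str, float]:
    """Share of logged queries, per platform, whose answer cited `domain`."""
    asked: dict[str, int] = defaultdict(int)
    cited: dict[str, int] = defaultdict(int)
    for platform, _query, cited_domains in log:
        asked[platform] += 1
        if domain in cited_domains:
            cited[platform] += 1
    return {platform: cited[platform] / asked[platform] for platform in asked}


baseline = [
    ("ChatGPT", "what is enterprise AI transformation", frozenset({"example.com", "rival.example"})),
    ("ChatGPT", "enterprise AI consulting firm", frozenset({"rival.example"})),
    ("Perplexity", "what is enterprise AI transformation", frozenset({"example.com"})),
    ("Perplexity", "enterprise AI consulting firm", frozenset()),  # no specific source cited
]
print(citation_share_by_platform(baseline, "example.com"))    # {'ChatGPT': 0.5, 'Perplexity': 0.5}
print(citation_share_by_platform(baseline, "rival.example"))  # {'ChatGPT': 1.0, 'Perplexity': 0.0}
```

Running the same function for each named competitor gives the competitor baseline from the same log.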

Step 3: Implement Answer Capsule Structure on Priority Pages

According to NVIDIA RAG research published on arXiv (2025), page-level chunking with 200 to 500 word segments achieves 0.648 retrieval accuracy, the highest of any chunking strategy tested. The practical implication: structure every major section of a target page as a self-contained unit that opens with a bolded lead sentence under 35 words, followed immediately by a 50 to 60 word expansion. This answer capsule format is intended to match how retrieval-augmented generation systems split pages into chunks and extract passages for AI-generated answers.
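The word-count targets are easy to check automatically before publishing. A minimal sketch, assuming whitespace-separated word counts and that the lead sentence and expansion have already been extracted from the page; it does not verify the bold formatting:

```python
def word_count(text: str) -> int:
    # Whitespace tokenization; CMS word counters may differ slightly.
    return len(text.split())


def check_answer_capsule(lead: str, expansion: str) -> list[str]:
    """Return human-readable problems; an empty list means the capsule fits the targets."""
    problems = []
    lead_words = word_count(lead)
    if lead_words >= 35:  # target: under 35 words
        problems.append(f"lead sentence has {lead_words} words (target: under 35)")
    expansion_words = word_count(expansion)
    if not 50 <= expansion_words <= 60:  # target: 50 to 60 words
        problems.append(f"expansion has {expansion_words} words (target: 50 to 60)")
    return problems


lead = "Multi-LLM citation tracking measures how often AI platforms cite your brand."
print(check_answer_capsule(lead, "word " * 40))
# ['expansion has 40 words (target: 50 to 60)']
```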

For a detailed walkthrough of how Fulcrum Digital implements answer capsule structure across enterprise content programs, see the content optimization services page.

Step 4: Embed Sourced Statistics and Named Expert Quotations

According to The Digital Bloom 2025 AI Visibility Report, which analyzed 680 million AI citations, sourced statistics increase AI citation rates by 22% and named expert quotations increase them by 37%. These are the two highest-leverage content-level signals for improving citation frequency. Every target page should include a minimum of one sourced statistic per major section and at least six total across the full piece. Prioritize data from Gartner, McKinsey, Forrester, Pew Research, MIT CSAIL, and arXiv. These sources carry established authority weight in AI training corpora.
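Coverage of sourced statistics can be screened with a rough heuristic. This sketch assumes a sentence counts as a sourced statistic when it contains a number followed by %, percent, million, or billion and an attribution such as "according to" or a parenthetical year. Both patterns are assumptions that will miss or miscount some phrasings, so treat the result as a prompt for manual review:

```python
import re

# Heuristic patterns (assumptions; tune for your house style).
STATISTIC = re.compile(r"\b\d+(?:\.\d+)?\s*(?:%|percent\b|million\b|billion\b)", re.IGNORECASE)
ATTRIBUTION = re.compile(r"according to|\((?:19|20)\d{2}\)", re.IGNORECASE)
SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def sourced_statistic_count(section_text: str) -> int:
    """Count sentences that contain both a statistic and an attribution."""
    sentences = SENTENCE_END.split(section_text)
    return sum(1 for s in sentences if STATISTIC.search(s) and ATTRIBUTION.search(s))


def statistic_coverage(sections: dict[str, str], per_section: int = 1,
                       total: int = 6) -> tuple[list[str], bool]:
    """Return (sections below the per-section minimum, whether the page total is met)."""
    counts = {name: sourced_statistic_count(text) for name, text in sections.items()}
    thin_sections = [name for name, count in counts.items() if count < per_section]
    return thin_sections, sum(counts.values()) >= total


sections = {
    "Intro": "According to Gartner (2024), search volume may fall 25% by 2026. More context follows.",
    "Method": "This section has no data yet.",
}
print(statistic_coverage(sections))  # (['Method'], False)
```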

Step 5: Deploy Complete Schema Markup

Publish Article schema, WebPage schema with Speakable using XPath selectors (never CSS class names), QAPage schema, FAQPage schema with word-counted answers, and HowTo schema for any procedural content. Validate all JSON-LD blocks using the Google Rich Results Test before deploying to production. Schema is not a citation guarantee. It is a structured signal that increases the probability AI systems correctly classify and retrieve your content. The full schema suite for this post is provided in Section 2 above.
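JSON-LD for two of these types can be generated rather than hand-written. A minimal Python sketch using only the standard `json` module; the function names, the `max_words` limit, and the XPath values are hypothetical placeholders, and the output should still go through the Google Rich Results Test as described above:

```python
import json


def faq_page_jsonld(faqs: list[tuple[str, str]], max_words: int = 120) -> str:
    """Build FAQPage JSON-LD, refusing answers longer than max_words (hypothetical limit)."""
    for question, answer in faqs:
        words = len(answer.split())
        if words > max_words:
            raise ValueError(f"answer to {question!r} has {words} words (limit {max_words})")
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": q,
             "acceptedAnswer": {"@type": "Answer", "text": a}}
            for q, a in faqs
        ],
    }
    return json.dumps(data, indent=2)


def speakable_webpage_jsonld(url: str, xpaths: list[str]) -> str:
    """Build WebPage JSON-LD whose Speakable section is selected by XPath, not CSS."""
    data = {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "url": url,
        "speakable": {"@type": "SpeakableSpecification", "xpath": xpaths},
    }
    return json.dumps(data, indent=2)


print(speakable_webpage_jsonld(
    "https://www.example.com/insights/citation-tracking",
    ["/html/head/title", "/html/head/meta[@name='description']/@content"],
))
```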

Technical schema guidance is also available in Fulcrum Digital’s technical SEO services overview.

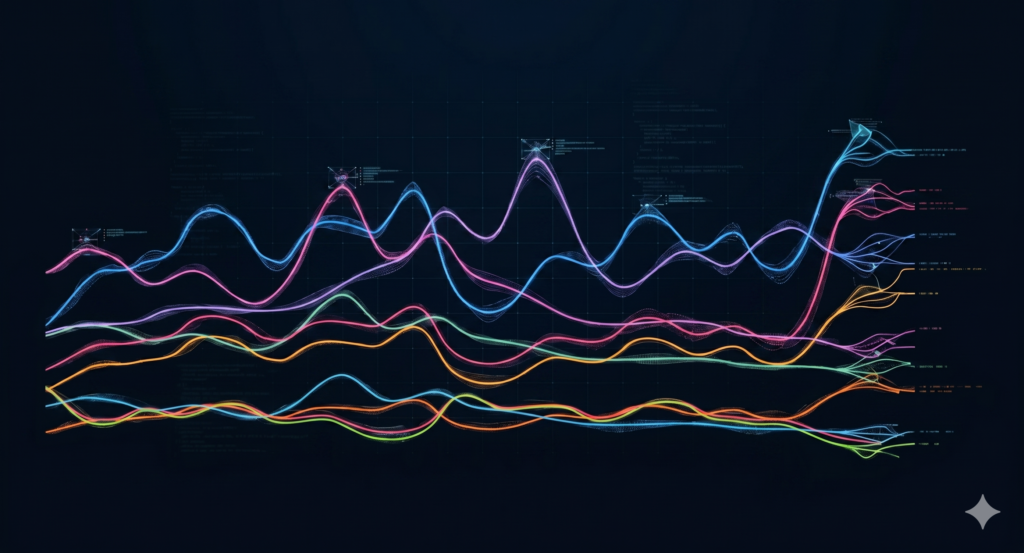

Step 6: Establish Monthly Citation Monitoring and Content Refresh Cadence

Research cited by LLMrefs (2025) shows AI citation rates drop sharply on content older than three months that lacks visible freshness signals. Set a monthly review of citation share by platform and query cluster. Refresh content with updated statistics and a revised “Last reviewed” date at least every 90 days. The review should answer three questions: which platform’s citation share improved, which declined, and which content changes correlate with the movement. That feedback loop is how citation tracking becomes optimization strategy rather than a passive scorecard.
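The first two review questions, plus the 90-day refresh rule, reduce to simple comparisons. A minimal sketch, assuming monthly citation shares stored as platform-to-fraction dictionaries and a hypothetical 5-point movement threshold; the third question (which content changes correlate with the movement) still needs human judgment:

```python
from datetime import date, timedelta


def share_movement(previous: dict[str, float], current: dict[str, float],
                   threshold: float = 0.05) -> tuple[list[str], list[str]]:
    """Return (platforms whose share rose by >= threshold, platforms whose share fell by >= threshold)."""
    improved = sorted(p for p in current if current[p] - previous.get(p, 0.0) >= threshold)
    declined = sorted(p for p in current if previous.get(p, 0.0) - current[p] >= threshold)
    return improved, declined


def pages_due_for_refresh(last_reviewed: dict[str, date], today: date,
                          max_age_days: int = 90) -> list[str]:
    """Return pages whose last review is at least max_age_days old."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, reviewed in last_reviewed.items() if reviewed <= cutoff)


print(share_movement({"ChatGPT": 0.40, "Gemini": 0.30, "Perplexity": 0.50},
                     {"ChatGPT": 0.50, "Gemini": 0.20, "Perplexity": 0.52}))
# (['ChatGPT'], ['Gemini'])
```

Platforms that disappear from the current month's data are ignored by `share_movement`; a production version would likely flag them separately.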

Multi-LLM Citation Tracking vs. Traditional Rank Tracking: What Each Measures

Traditional rank tracking and citation tracking measure different things. Neither replaces the other, but citation tracking captures a dimension of search presence that rank tracking structurally cannot.

The table below summarizes the key differences:

| Dimension | Traditional Rank Tracking | Multi-LLM Citation Tracking |
|---|---|---|
| What it measures | URL position in a ranked list | Brand/domain presence in AI-generated answers |
| Channel | Google and Bing SERPs | ChatGPT, Gemini, Perplexity, Claude, Copilot |
| Click required? | Yes, for organic traffic | Not always: many AI answers surface brands without a click |
| Competitive signal | Competitor keyword rankings | Competitor citation share by platform and query |
| Freshness sensitivity | Moderate | High: citation rates drop on content older than 90 days without freshness signals |
| Schema dependency | Moderate | High: QAPage, Speakable, and FAQ schema are direct retrieval signals |

The practical upshot: an enterprise can rank well in traditional search and be invisible in AI-generated answers. It can also appear frequently in AI-generated answers for high-intent queries while ranking on page 3 in Google. Both scenarios represent revenue exposure. Multi-LLM citation tracking is the only instrument that makes the second scenario visible. For organizations that want to understand both in a single dashboard, RankAbove.ai, an omni-search performance measurement platform covering SEO, GEO, AEO, and web accessibility, provides a scored report across all four dimensions with prioritized recommendations.

Multi-LLM Citation Tracking as a Competitive Intelligence Tool

Citation tracking data answers a question that traditional competitive intelligence cannot: which AI platforms are actively recommending your competitors by name, and for which queries?

This is arguably the most immediately actionable output of a citation tracking program. When a competitor appears in ChatGPT’s recommendation for “enterprise AI partner for financial services” and your organization does not, that is not a brand awareness problem. It is a retrieval problem, and it has a specific set of content and structural causes that can be diagnosed and addressed.

Competitive citation analysis should be run against the same 20 to 30 query set used to establish your own baseline. For each query, log which competitors appear, on which platforms, and in what context (named recommendation, comparison mention, or secondary reference). Repeat this monthly. Over a 90-day period, patterns emerge: which competitors have strong citation presence on Perplexity but weak presence on Gemini, which queries your organization owns on Claude but cedes to a competitor on ChatGPT. This is the kind of platform-level intelligence that makes AI search strategy actionable rather than directional. The Fulcrum Digital AI strategy team uses this data structure as the foundation for competitive content gap analysis.
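The competitive log described above can be summarized with a few lines of Python. This sketch assumes each observation is a (query, platform, brand, context) tuple; the brand names and queries in the usage example are hypothetical:

```python
from collections import Counter, defaultdict

# (query, platform, brand, context), e.g. context = "named recommendation"
Observation = tuple[str, str, str, str]


def presence_by_platform(observations: list[Observation]) -> dict[str, Counter]:
    """Count how many logged answers mention each brand, per platform."""
    presence: dict[str, Counter] = defaultdict(Counter)
    for _query, platform, brand, _context in observations:
        presence[brand][platform] += 1
    return presence


def ceded_queries(observations: list[Observation], own_brand: str, platform: str) -> list[str]:
    """Queries where some brand is cited on `platform` but own_brand is not."""
    brands_by_query: dict[str, set[str]] = defaultdict(set)
    for query, plat, brand, _context in observations:
        if plat == platform:
            brands_by_query[query].add(brand)
    return sorted(q for q, brands in brands_by_query.items() if own_brand not in brands)


observations = [
    ("enterprise AI partner for financial services", "ChatGPT", "RivalCo", "named recommendation"),
    ("enterprise AI partner for financial services", "Claude", "OurBrand", "named recommendation"),
    ("best enterprise AI partners compared", "ChatGPT", "OurBrand", "comparison mention"),
    ("best enterprise AI partners compared", "ChatGPT", "RivalCo", "comparison mention"),
    ("best enterprise AI partners compared", "Perplexity", "RivalCo", "secondary reference"),
]
print(ceded_queries(observations, "OurBrand", "ChatGPT"))
# ['enterprise AI partner for financial services']
```

Comparing the `ceded_queries` output across platforms month over month surfaces the patterns described above, such as a query owned on Claude but ceded on ChatGPT.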

A note on interpretation: AI citation patterns are not static. They shift as AI platforms update their models, adjust their retrieval parameters, and change how they weight source authority. A citation tracking program that runs once and is not refreshed produces stale intelligence within 60 to 90 days. The monitoring cadence is not optional. It is what converts citation data from a point-in-time audit into a compounding intelligence asset.

Frequently Asked Questions: Multi-LLM Citation Tracking

What is multi-LLM citation tracking?

Multi-LLM citation tracking measures how often and in what context AI platforms cite your brand or content.

It reveals which platforms surface your domain, which queries trigger citations, and where gaps exist across ChatGPT, Gemini, Perplexity, Claude, and Microsoft Copilot. Unlike traditional rank tracking, which records URL position in a link list, citation tracking records whether an AI system trusts your content enough to include it in a generated answer. These are different measurement questions with different strategic implications.

Why does citation tracking matter more than traditional rank tracking?

Traditional rank tracking shows where a page appears in a list. Citation tracking shows whether AI systems trust your content enough to recommend it in a generated answer.

As AI answer engines capture more zero-click traffic, citation presence often drives more qualified discovery than a page-ten ranking ever could. According to Gartner (2024), traditional search engine volume is projected to decline 25% by 2026 as AI-powered interfaces absorb informational queries. That shift makes citation presence a first-order search metric, not a secondary one.

Which AI platforms should enterprises track for citations?

Enterprises should track at minimum: ChatGPT, Gemini, Perplexity, Claude, and Microsoft Copilot.

Each platform indexes content differently, applies different citation policies, and serves different user demographics. Aggregate citation share across all five provides the most complete picture of AI search presence. Tracking a single platform produces a partial view that can misrepresent both opportunity and competitive exposure. Start with the five above; extend coverage to additional AI services (such as assistants built on Amazon Bedrock) as the program matures.

How does multi-LLM citation tracking differ from GEO?

GEO is the strategy. Multi-LLM citation tracking is the measurement layer that validates whether the strategy is working.

GEO (Generative Engine Optimization) is the practice of structuring content so AI systems retrieve and cite it. Multi-LLM citation tracking records whether those GEO efforts are producing actual citation outcomes across platforms. One is the discipline of content optimization for AI retrieval; the other is the scorecard that confirms or challenges the optimization. Both are necessary. GEO without citation tracking is working without feedback.

What content signals increase AI citation rates?

Sourced statistics and named expert quotations are the two highest-leverage citation rate signals, increasing AI citation frequency by 22% and 37% respectively.

According to The Digital Bloom 2025 AI Visibility Report, which analyzed 680 million citations, sourced statistics increase AI citation rates by 22% and named expert quotations increase them by 37%. Answer capsule structure (bolded lead sentence under 35 words, followed by a 50 to 60 word expansion), entity disambiguation language, and robots.txt permissions for AI crawlers are additional structural signals that lift citation frequency. More detail on implementing these signals is available in the Fulcrum Digital insights library.

What is the role of robots.txt in AI citation eligibility?

Robots.txt controls whether AI crawler agents may crawl your content. A blocked crawler cannot fetch the page, regardless of content quality.

If GPTBot, Anthropic-AI, Amazon-Bedrock, Google-Extended, or PerplexityBot is blocked in robots.txt, that crawler cannot fetch the content, and the corresponding AI platform loses that route to reading, processing, or citing it. This is a prerequisite, not an optimization. Perfect on-page structure is irrelevant if the crawler cannot reach the page. Verify AI crawler allowances in robots.txt before any other citation optimization work. This is identified as a non-negotiable AEO prerequisite in the Klizos AEO Playbook 2025.

How often should enterprises audit AI citation performance?

At minimum monthly, with high-priority topic clusters reviewed weekly.

Research cited by LLMrefs (2025) shows citation rates drop sharply on content older than three months without visible freshness signals. Review cadence should align with content refresh schedules: if you publish new content weekly, citation tracking should be reviewed weekly for that cluster. Monthly reviews are a floor, not a ceiling, for organizations in competitive categories where AI citation share is a material revenue driver.
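The cadence rule in this answer can be expressed as a small function. A minimal sketch, assuming cadence is measured in days and that "high priority" is a flag your team assigns per topic cluster:

```python
def review_interval_days(publish_interval_days: int, high_priority: bool = False) -> int:
    """Days between citation reviews for one topic cluster."""
    interval = min(30, publish_interval_days)  # review at least monthly, and as often as you publish
    if high_priority:
        interval = min(interval, 7)            # high-priority clusters: at least weekly
    return interval


print(review_interval_days(7))    # 7: weekly publishing, weekly review
print(review_interval_days(90))   # 30: monthly is the floor
```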

About the Author

Don Pingaro is Regional Marketing Director, North America at Fulcrum Digital, an enterprise digital engineering and AI transformation firm, and Omni-Search Subject Matter Expert at RankAbove.ai, an omni-search performance measurement platform covering SEO, GEO, AEO, and web accessibility. Don works at the intersection of enterprise marketing strategy and AI search, helping organizations translate GEO and AEO theory into measurable citation outcomes across ChatGPT, Gemini, Perplexity, and Claude. His work focuses on building the measurement infrastructure that makes AI search optimization accountable rather than directional.

This post was last reviewed and updated in April 2025. Read more on the Fulcrum Digital blog.